TripAdvisor.com is one of the most popular service portals in the travel industry, containing data about trips, hotels and restaurants. In 2026, TripAdvisor's GraphQL endpoints remain the most efficient way to extract structured hotel and review data at scale.

In this tutorial, we'll take a look at how to scrape TripAdvisor reviews as well as other details like hotel information. We'll also automate finding hotel pages by scraping search. The concepts we'll explain can be applied to other parts of the website, such as restaurants, tours and activities.

Key Takeaways

Learn how to build a TripAdvisor scraper in Python with httpx and parsel, extracting hotel data from GraphQL APIs and hidden web data for comprehensive travel information collection.

- Reverse engineer TripAdvisor's GraphQL search endpoints by intercepting browser network requests and analyzing payload structures

- Parse hidden web data from JavaScript variables using XPath selectors to extract hotel details and review information

- Implement GraphQL query replication with proper headers and request spacing to bypass anti-scraping measures

- Extract structured travel data including hotel names, prices, ratings, and detailed review content from JSON responses

- Handle dynamic content loading and pagination through concurrent request processing and response parsing

- Configure realistic browser headers and User-Agent rotation to avoid detection during large-scale data collection

Full Tripadvisor Scraper Code

Why Scrape TripAdvisor?

TripAdvisor is one of the most popular data sources in the travel industry. Most people are interested in scraping TripAdvisor reviews but this public source also contains data like the hotel, tour and restaurant information and pricing. So, by scraping TripAdvisor, we can gather information about the hotel industry as well as public opinions about it.

This data offers great value in business intelligence areas such as market and competitive analysis. In other words, data available on TripAdvisor can give us insights into the travel industry, which can be used to generate leads and improve business performance.

For more on scraping use cases see our extensive write-up Scraping Use Cases

Project Setup

To scrape TripAdvisor, we'll use a few Python packages:

- httpx - HTTP client library which will let us communicate with TripAdvisor.com's servers

- parsel - HTML parsing library we'll use to parse our scraped HTML files using web selectors, such as XPath and CSS selectors.

These packages can be easily installed via the pip command:

$ pip install "httpx[http2,brotli]" parsel

Alternatively, you're free to swap httpx out for any other HTTP client package such as requests, as we'll only need basic HTTP functions which are almost interchangeable in every library. As for parsel, another great alternative is the beautifulsoup package.

Finding Tripadvisor Hotels

Let's start our TripAdvisor scraper by taking a look at how we can find hotels on the website. For this, let's see how TripAdvisor's search function works.

When we type a search query on the website and watch the browser's network requests, we can see that a GraphQL-powered POST request is sent in the background. This request returns search page recommendations. Each of these recommendations contains preview data of hotels, restaurants or tours.

Let's replicate this GraphQL request in our Python-based scraper. We'll establish an HTTP connection session and submit a POST request that mimics what we've observed above:

import asyncio
import json
import random
import string
from typing import List, TypedDict

import httpx
from loguru import logger as log


class LocationData(TypedDict):
    """result type for tripadvisor location data"""

    localizedName: str
    url: str
    HOTELS_URL: str
    ATTRACTIONS_URL: str
    RESTAURANTS_URL: str
    placeType: str
    latitude: float
    longitude: float


async def scrape_location_data(query: str, client: httpx.AsyncClient) -> List[LocationData]:
    """
    scrape search location data from a given query.
    e.g. "New York" will return us TripAdvisor's location details for this query
    """
    log.info(f"scraping location data: {query}")
    # the graphql payload that defines our search
    # note: changing values outside of expected ranges can get the web scraper blocked
    payload = [
        {
            "variables": {
                "request": {
                    "query": query,
                    "limit": 10,
                    "scope": "WORLDWIDE",
                    "locale": "en-US",
                    "scopeGeoId": 1,
                    "searchCenter": None,
                    # note: here you can expand the search to other result types
                    "types": [
                        "LOCATION",
                        # "QUERY_SUGGESTION",
                        # "RESCUE_RESULT"
                    ],
                    "locationTypes": [
                        "GEO",
                        "AIRPORT",
                        "ACCOMMODATION",
                        "ATTRACTION",
                        "ATTRACTION_PRODUCT",
                        "EATERY",
                        "NEIGHBORHOOD",
                        "AIRLINE",
                        "SHOPPING",
                        "UNIVERSITY",
                        "GENERAL_HOSPITAL",
                        "PORT",
                        "FERRY",
                        "CORPORATION",
                        "VACATION_RENTAL",
                        "SHIP",
                        "CRUISE_LINE",
                        "CAR_RENTAL_OFFICE",
                    ],
                    "userId": None,
                    "context": {},
                    "enabledFeatures": ["articles"],
                    "includeRecent": True,
                }
            },
            # Every graphql query has a query ID that doesn't change often:
            "query": "84b17ed122fbdbd4",
            "extensions": {"preRegisteredQueryId": "84b17ed122fbdbd4"},
        }
    ]

    # we need to generate a random request ID for this request to succeed
    random_request_id = "".join(
        random.choice(string.ascii_lowercase + string.digits) for _ in range(180)
    )
    headers = {
        "X-Requested-By": random_request_id,
        "Referer": "https://www.tripadvisor.com/Hotels",
        "Origin": "https://www.tripadvisor.com",
    }
    result = await client.post(
        url="https://www.tripadvisor.com/data/graphql/ids",
        json=payload,
        headers=headers,
    )
    data = json.loads(result.content)
    results = data[0]["data"]["Typeahead_autocomplete"]["results"]
    results = [r["details"] for r in results]  # strip metadata
    log.info(f"found {len(results)} results")
    return results


# To avoid being instantly blocked we'll be using request headers that
# mimic Chrome browser on Windows
BASE_HEADERS = {
    "authority": "www.tripadvisor.com",
    "accept-language": "en-US,en;q=0.9",
    "user-agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/96.0.4664.110 Safari/537.36",
    "accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8",
    "accept-encoding": "gzip, deflate, br",
}

# start HTTP session client with our headers and HTTP2
client = httpx.AsyncClient(
    http2=True,  # http2 connections are significantly less likely to get blocked
    headers=BASE_HEADERS,
    timeout=httpx.Timeout(150.0),
    limits=httpx.Limits(max_connections=5),
)


async def run():
    result = await scrape_location_data("Malta", client)
    print(json.dumps(result, indent=2))


if __name__ == "__main__":
    asyncio.run(run())

This GraphQL request might appear complicated, but we mostly use values copied from our browser. Let's note a few points used in the above code:

- The headers Referer and Origin are required to not be blocked by TripAdvisor.

- The header X-Requested-By is a tracking ID header and in this case, we just generate a random string of lowercase letters and digits.

We're also using httpx with http2 enabled to make our requests faster and less likely to get blocked.
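To make the header logic easier to reuse, the request ID and the GraphQL headers can be wrapped in small helpers. This is only a minimal sketch: the names make_request_id and build_graphql_headers are our own and not part of httpx or TripAdvisor, and the 180-character length simply mirrors the value used in the snippet above.

```python
import random
import string

# alphabet used for the tracking ID: lowercase letters and digits
REQUEST_ID_ALPHABET = string.ascii_lowercase + string.digits


def make_request_id(length: int = 180) -> str:
    """generate a random ID for the X-Requested-By header (hypothetical helper)"""
    return "".join(random.choice(REQUEST_ID_ALPHABET) for _ in range(length))


def build_graphql_headers() -> dict:
    """build fresh per-request headers for the GraphQL endpoint (hypothetical helper)"""
    return {
        "X-Requested-By": make_request_id(),
        "Referer": "https://www.tripadvisor.com/Hotels",
        "Origin": "https://www.tripadvisor.com",
    }


headers = build_graphql_headers()
print(len(headers["X-Requested-By"]))
```

Generating the headers per request means every GraphQL call gets its own random ID instead of reusing one value for the whole session.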

Let's run our TripAdvisor scraper and see what it finds for the "Malta" keyword:

Example Output

{

"localizedName": "Malta",

"localizedAdditionalNames": {

"longOnlyHierarchy": "Europe"

},

"streetAddress": {

"street1": null

},

"locationV2": {

"placeType": "COUNTRY",

"names": {

"longOnlyHierarchyTypeaheadV2": "Europe"

},

"vacationRentalsRoute": {

"url": "/VacationRentals-g190311-Reviews-Malta-Vacation_Rentals.html"

}

},

"url": "/Tourism-g190311-Malta-Vacations.html",

"HOTELS_URL": "/Hotels-g190311-Malta-Hotels.html",

"ATTRACTIONS_URL": "/Attractions-g190311-Activities-Malta.html",

"RESTAURANTS_URL": "/Restaurants-g190311-Malta.html",

"placeType": "COUNTRY",

"latitude": 35.892,

"longitude": 14.42979,

"isGeo": true,

"thumbnail": {

"photoSizeDynamic": {

"maxWidth": 2880,

"maxHeight": 1920,

"urlTemplate": "https://dynamic-media-cdn.tripadvisor.com/media/photo-o/21/66/c5/99/caption.jpg?w={width}&h={height}&s=1&cx=1203&cy=677&chk=v1_cf397a9cdb4fbd9239a9"

}

}

}

We can see that we get URLs to Hotel, Restaurant and Attraction searches! We can use these URLs to scrape search results themselves.
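Note that the URLs in the result are relative. As a small sketch of how they can be turned into absolute URLs ready for scraping, the helper below uses urljoin from the standard library. The function name absolute_search_urls is our own, and skipping missing keys assumes that some results may lack one of the three URLs.

```python
from urllib.parse import urljoin

BASE_URL = "https://www.tripadvisor.com"


def absolute_search_urls(location: dict) -> dict:
    """turn the relative search URLs of one location result into absolute ones"""
    keys = ["HOTELS_URL", "ATTRACTIONS_URL", "RESTAURANTS_URL"]
    # skip keys that are missing or empty (assumption: not every result has all three)
    return {key: urljoin(BASE_URL, location[key]) for key in keys if location.get(key)}


# trimmed version of the example output above
malta = {
    "HOTELS_URL": "/Hotels-g190311-Malta-Hotels.html",
    "ATTRACTIONS_URL": "/Attractions-g190311-Activities-Malta.html",
    "RESTAURANTS_URL": "/Restaurants-g190311-Malta.html",
}
print(absolute_search_urls(malta)["HOTELS_URL"])
# https://www.tripadvisor.com/Hotels-g190311-Malta-Hotels.html
```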

Scraping Tripadvisor Search

We figured out how to use TripAdvisor's search suggestions to find search pages. Now, let's scrape these pages for hotel preview data like links and names.

Let's take a look at how we can do that by extending our scraping code:

import asyncio
import json
import math
from typing import List, Optional, TypedDict
from urllib.parse import urljoin

import httpx
from loguru import logger as log
from parsel import Selector

from snippet1 import scrape_location_data, client


class Preview(TypedDict):
    url: str
    name: str


def parse_search_page(response: httpx.Response) -> List[Preview]:
    """parse result previews from TripAdvisor search page"""
    log.info(f"parsing search page: {response.url}")
    parsed = []
    # Search results are contained in boxes which can be in two locations.
    # this is location #1:
    selector = Selector(response.text)
    for box in selector.css("span.listItem"):
        title = box.css("div[data-automation=hotel-card-title] a ::text").getall()[1]
        url = box.css("div[data-automation=hotel-card-title] a::attr(href)").get()
        parsed.append(
            {
                "url": urljoin(str(response.url), url),  # turn url absolute
                "name": title,
            }
        )
    if parsed:
        return parsed
    # location #2
    for box in selector.css("div.listing_title>a"):
        parsed.append(
            {
                "url": urljoin(
                    str(response.url), box.xpath("@href").get()
                ),  # turn url absolute
                "name": box.xpath("text()").get("").split(". ")[-1],
            }
        )
    return parsed


async def scrape_search(query: str, max_pages: Optional[int] = None) -> List[Preview]:
    """scrape search results of a search query"""
    # first scrape location data and the first page of results
    log.info(f"{query}: scraping first search results page")
    try:
        location_data = (await scrape_location_data(query, client))[0]  # take first result
    except IndexError:
        log.error(f"could not find location data for query {query}")
        return []
    hotel_search_url = "https://www.tripadvisor.com" + location_data["HOTELS_URL"]

    log.info(f"found hotel search url: {hotel_search_url}")
    first_page = await client.get(hotel_search_url)
    assert first_page.status_code == 200, "scraper is being blocked"

    # parse first page
    results = parse_search_page(first_page)
    if not results:
        log.error("query {} found no results", query)
        return []

    # extract pagination metadata to scrape all pages concurrently
    # note: httpx responses have no built-in selector, so we create one ourselves
    selector = Selector(first_page.text)
    page_size = len(results)
    total_results = selector.xpath("//span/text()").re(
        r"(\d*\,*\d+) properties"
    )[0]
    total_results = int(total_results.replace(",", ""))
    next_page_url = selector.css(
        'a[aria-label="Next page"]::attr(href)'
    ).get()
    next_page_url = urljoin(hotel_search_url, next_page_url)  # turn url absolute
    total_pages = int(math.ceil(total_results / page_size))
    if max_pages and total_pages > max_pages:
        log.debug(
            f"{query}: only scraping {max_pages} max pages from {total_pages} total"
        )
        total_pages = max_pages

    # scrape remaining pages
    log.info(
        f"{query}: found {total_results=}, {page_size=}. Scraping {total_pages} pagination pages"
    )
    other_page_urls = [
        # note: "oa" stands for "offset anchors"
        next_page_url.replace(f"oa{page_size}", f"oa{page_size * i}")
        for i in range(1, total_pages)
    ]
    # we use assert to ensure that we don't accidentally produce duplicates which means something went wrong
    assert len(set(other_page_urls)) == len(other_page_urls)

    to_scrape = [client.get(url) for url in other_page_urls]
    for response in asyncio.as_completed(to_scrape):
        results.extend(parse_search_page(await response))
    return results


# example use:
if __name__ == "__main__":

    async def run():
        result = await scrape_search("Malta")
        print(json.dumps(result, indent=2))

    asyncio.run(run())

Example Output

[
  {
    "id": "573828",
    "url": "/Hotel_Review-g230152-d573828-Reviews-Radisson_Blu_Resort_Spa_Malta_Golden_Sands-Mellieha_Island_of_Malta.html",
    "name": "Radisson Blu Resort & Spa, Malta Golden Sands"
  },
  ...
]

Here, we create our scrape_search() function that takes in a query and finds the correct search page. Then, we scrape the first results page and use its pagination data to scrape the remaining pages concurrently.
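The pagination step is mostly arithmetic, so it can be sketched in isolation. The helper below mirrors the "oa" offset rewriting from scrape_search(). The name build_page_urls and the sample URL are hypothetical and used only for illustration.

```python
import math
from typing import List


def build_page_urls(next_page_url: str, page_size: int, total_results: int) -> List[str]:
    """generate URLs for result pages 2..N by rewriting the "oa" offset"""
    total_pages = math.ceil(total_results / page_size)
    return [
        next_page_url.replace(f"oa{page_size}", f"oa{page_size * i}")
        for i in range(1, total_pages)
    ]


# hypothetical second-page URL with 30 results per page and 95 results in total
urls = build_page_urls(
    "https://www.tripadvisor.com/Hotels-g190311-oa30-Malta-Hotels.html",
    page_size=30,
    total_results=95,
)
for url in urls:
    print(url)
```

With 95 results and 30 per page there are 4 pages in total, so the helper returns 3 URLs (offsets 30, 60 and 90), as the first page has already been scraped.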

With preview results in hand, we can scrape information, pricing and review data of each TripAdvisor hotel listing - let's do that in the following section.

Scraping Tripadvisor Hotel Data

To scrape hotel information, we'll have to collect each hotel page we found using the search.

Before we start scraping though, let's take a look at an individual hotel page to see where the data is located.

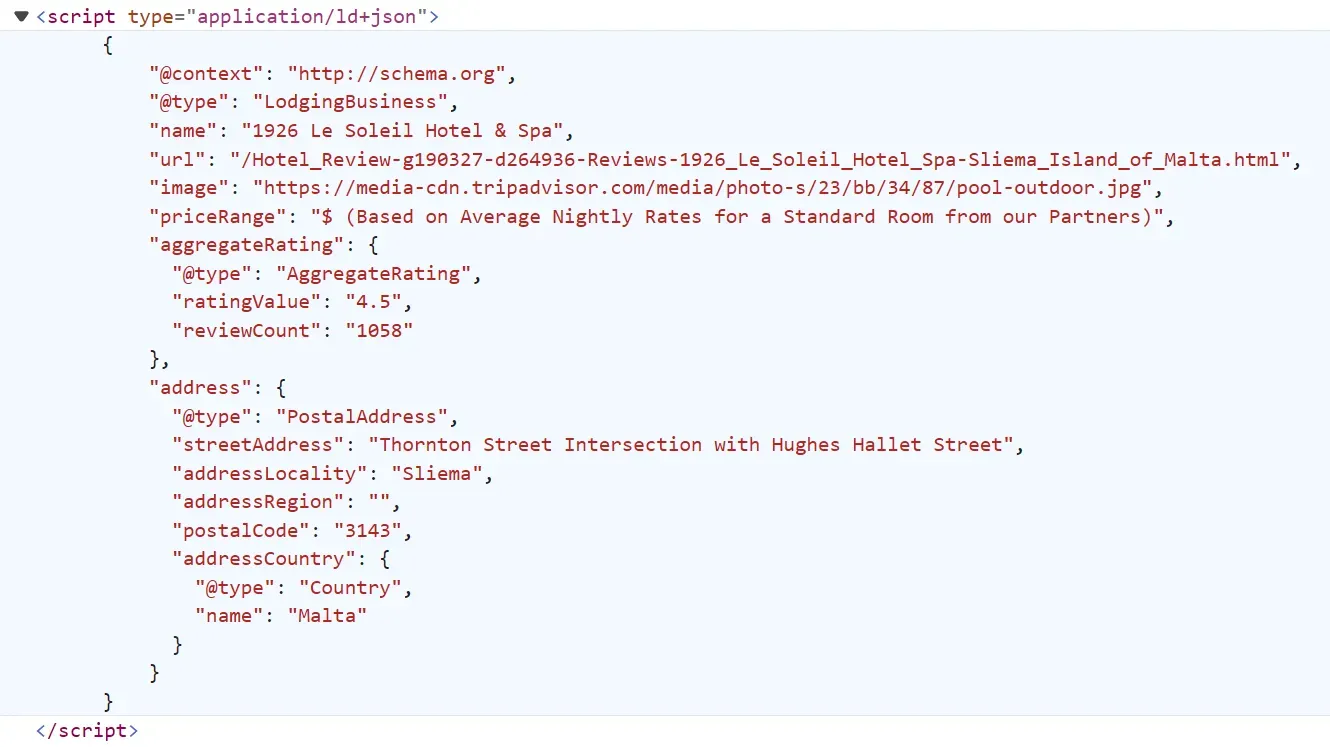

For example, let's look at this 1926 Hotel & Spa hotel page. If we take a look at the page source in our browser, we can see some JavaScript cache data embedded in the page.

This is the same data that is displayed on the page, stored before it's rendered into the HTML. It's often known as hidden web data.
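To illustrate the idea, here is a minimal, dependency-free sketch that pulls a JSON payload out of a script tag. It uses Python's built-in html.parser instead of parsel to stay self-contained, the HTML is made up, and the application/ld+json script type is an assumption about where such data lives rather than a guarantee about TripAdvisor's markup.

```python
import json
from html.parser import HTMLParser
from typing import List


class JsonLdExtractor(HTMLParser):
    """collect the JSON payloads of <script type="application/ld+json"> tags"""

    def __init__(self) -> None:
        super().__init__()
        self._in_json_ld = False
        self._buffer: List[str] = []
        self.documents: List[dict] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self.documents.append(json.loads("".join(self._buffer)))
            self._in_json_ld = False


# made-up, heavily simplified page used only for illustration
html = """
<html><head>
<script type="application/ld+json">
{"@type": "LodgingBusiness", "name": "Example Hotel",
 "aggregateRating": {"ratingValue": "4.5", "reviewCount": "1058"}}
</script>
</head><body><h1>Example Hotel</h1></body></html>
"""
parser = JsonLdExtractor()
parser.feed(html)
print(parser.documents[0]["aggregateRating"]["reviewCount"])
```

The same data is visible in the rendered page, but reading it from the embedded JSON avoids writing a separate selector for every field.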

Let's scrape Tripadvisor hotel data by extracting this hidden data alongside other data in the page HTML:

import asyncio
import json
from typing import Dict

from httpx import AsyncClient, Response
from parsel import Selector

client = AsyncClient(
    headers={
        "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.114 Safari/537.36",
        "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9",
        "Accept-Language": "en-US,en;q=0.9",
    },
    follow_redirects=True,
    timeout=15.0,
)


def parse_hotel_page(result: Response) -> Dict:
    """parse hotel data from hotel pages"""
    selector = Selector(result.text)
    # the hidden data is a JSON document inside a script tag
    basic_data = json.loads(selector.xpath("//script[contains(text(),'aggregateRating')]/text()").get())
    description = selector.css("div.fIrGe._T::text").get()
    amenities = []
    for feature in selector.xpath("//div[contains(@data-test-target, 'amenity')]/text()"):
        amenities.append(feature.get())

    return {
        "basic_data": basic_data,
        "description": description,
        "featues": amenities,
    }


async def scrape_hotel(url: str) -> Dict:
    """Scrape hotel data"""
    first_page = await client.get(url)
    # any status other than 200 (e.g. 403) means the request was blocked or failed
    assert first_page.status_code == 200, "request is blocked"
    hotel_data = parse_hotel_page(first_page)
    print("scraped one hotel data")
    return hotel_data

Run the code

async def run():
    hotel_data = await scrape_hotel(
        url="https://www.tripadvisor.com/Hotel_Review-g190327-d264936-Reviews-1926_Hotel_Spa-Sliema_Island_of_Malta.html"
    )
    # print the result in JSON format
    print(json.dumps(hotel_data, indent=2))


if __name__ == "__main__":
    asyncio.run(run())

In the above code, we start by initializing an httpx client with basic headers and define two functions:

parse_hotel_page: For parsing the hotel data from the HTML using selectors.

scrape_hotel: For scraping Tripadvisor hotel pages by sending a request to the hotel page URL and then parsing the HTML.

Here is the result we got:

Output

{

"basic_data": {

"@context": "http://schema.org",

"@type": "LodgingBusiness",

"name": "1926 Le Soleil Hotel & Spa",

"url": "/Hotel_Review-g190327-d264936-Reviews-1926_Le_Soleil_Hotel_Spa-Sliema_Island_of_Malta.html",

"image": "https://media-cdn.tripadvisor.com/media/photo-s/23/bb/34/87/pool-outdoor.jpg",

"priceRange": "$ (Based on Average Nightly Rates for a Standard Room from our Partners)",

"aggregateRating": {

"@type": "AggregateRating",

"ratingValue": "4.5",

"reviewCount": "1058"

},

"address": {

"@type": "PostalAddress",

"streetAddress": "Thornton Street Intersection with Hughes Hallet Street",

"addressLocality": "Sliema",

"addressRegion": "",

"postalCode": "3143",

"addressCountry": {

"@type": "Country",

"name": "Malta"

}

}

},

"description": "Inspired by the life and passions of one man and featuring a touch of the roaring twenties, 1926 Le Soleil Hotel & Spa offers luxurious rooms and suites in the central city of Sliema. The hotel is located 200 meters from the seafront and also offers a splendid 1926 La Plage Beach Club on the water’s edge as well as a luxury SPA. The beach club is located 200 meters away from the hotel and is a seasonal operation. Our concept of “Lean Luxury” includes the following: • Luxury rooms at affordable prices • Uncomplicated comfort and a great sleep • Smart design technology • Raindance showerheads • Flat screens • SuitePad Tablets • Self check in and check out (if desired) • Coffee & tea making facilities",

"featues": [

"Free public parking nearby",

"Free internet",

"Pool",

"Fitness Center with Gym / Workout Room",

"Bar / lounge",

"Airport transportation",

"Meeting rooms",

"Spa",

"Paid private parking nearby",

"Street parking",

"Wifi",

"Pool / beach towels",

"Infinity pool",

"Pool with view",

"Outdoor pool",

"Heated pool",

"Saltwater pool",

"Shallow end in pool",

"Fitness / spa locker rooms",

"Sauna",

"Coffee shop",

"Restaurant",

"Breakfast available",

"Breakfast buffet",

"Complimentary Instant Coffee",

"Complimentary tea",

"Complimentary welcome drink",

"Outdoor dining area",

"Vending machine",

"Poolside bar",

"Taxi service",

"Steam room",

"24-hour security",

"Baggage storage",

"Sun deck",

"Sun loungers / beach chairs",

"Sun terrace",

"Doorperson",

"First aid kit",

"Umbrella",

"24-hour check-in",

"24-hour front desk",

"Express check-in / check-out",

"Dry cleaning",

"Laundry service",

"Ironing service",

"Shoeshine",

"Bathrobes",

"Air conditioning",

"Desk",

"Housekeeping",

"Interconnected rooms available",

"Refrigerator",

"Cable / satellite TV",

"Walk-in shower",

"Telephone",

"Wardrobe / closet",

"Bottled water",

"Private bathrooms",

"Tile / marble floor",

"Wake-up service / alarm clock",

"Flatscreen TV",

"Hair dryer",

"Non-smoking rooms"

]

}

Our TripAdvisor scraper got the essential hotel data. However, we are missing the hotel reviews data - let's scrape them next!
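One detail worth noting in the output above is that values like ratingValue and reviewCount arrive as strings. Below is a small sketch of how they could be normalized before storage. The function name normalize_basic_data and the flat output keys are our own choices, and the field names assume the output shape shown above.

```python
from typing import Any, Dict


def normalize_basic_data(basic_data: Dict[str, Any]) -> Dict[str, Any]:
    """flatten the hotel's basic data and convert numeric strings to numbers"""
    rating = basic_data.get("aggregateRating") or {}
    address = basic_data.get("address") or {}
    return {
        "name": basic_data.get("name"),
        "rating": float(rating["ratingValue"]) if rating.get("ratingValue") else None,
        "review_count": int(rating["reviewCount"]) if rating.get("reviewCount") else None,
        "city": address.get("addressLocality"),
        "country": (address.get("addressCountry") or {}).get("name"),
    }


# trimmed version of the output above
sample = {
    "name": "1926 Le Soleil Hotel & Spa",
    "aggregateRating": {"ratingValue": "4.5", "reviewCount": "1058"},
    "address": {"addressLocality": "Sliema", "addressCountry": {"name": "Malta"}},
}
print(normalize_basic_data(sample))
```

Missing fields are returned as None instead of raising an error, which keeps the scraper running if a hotel page lacks rating or address data.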

Scraping Tripadvisor Hotel Reviews

Review data is found on the same hotel page. We'll extend our parse_hotel_page function to capture this data. And since we have the total number of reviews, we'll use it to get the total number of review pages and crawl over them. Let's apply this within our previous Tripadvisor scraper code:

import asyncio
import json
import math
from typing import Dict, Optional

from httpx import AsyncClient, Response
from parsel import Selector

client = AsyncClient(
    headers={
        # use same headers as a popular web browser (Chrome on macOS in this case)
        "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.114 Safari/537.36",
        "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9",
        "Accept-Language": "en-US,en;q=0.9",
    },
    follow_redirects=True,
)


def parse_hotel_page(result: Response) -> Dict:
    """parse hotel data from hotel pages"""
    selector = Selector(result.text)
    basic_data = json.loads(selector.xpath("//script[contains(text(),'aggregateRating')]/text()").get())
    description = selector.css("div.fIrGe._T::text").get()
    amenities = []
    for feature in selector.xpath("//div[contains(@data-test-target, 'amenity')]/text()"):
        amenities.append(feature.get())
    reviews = []
    for review in selector.xpath("//div[@data-reviewid]"):
        title = review.xpath(".//div[@data-test-target='review-title']/a/span/span/text()").get()
        text = "".join(review.xpath(".//span[contains(@data-automation, 'reviewText')]/span/text()").extract())
        rate = review.xpath(".//div[@data-test-target='review-rating']/span/@class").get()
        # the rating is encoded in the class name, e.g. a class ending in "_50" becomes 5
        rate = (int(rate.split("ui_bubble_rating")[-1].split("_")[-1].replace("0", ""))) if rate else None
        trip_data = review.xpath(".//span[span[contains(text(),'Date of stay')]]/text()").get()
        reviews.append({
            "title": title,
            "text": text,
            "rate": rate,
            "tripDate": trip_data,
        })

    return {
        "basic_data": basic_data,
        "description": description,
        "featues": amenities,
        "reviews": reviews,
    }


async def scrape_hotel(url: str, max_review_pages: Optional[int] = None) -> Dict:
    """Scrape hotel data and reviews"""
    first_page = await client.get(url)
    # any status other than 200 (e.g. 403) means the request was blocked or failed
    assert first_page.status_code == 200, "request is blocked"
    hotel_data = parse_hotel_page(first_page)

    # get the number of total review pages
    _review_page_size = 10
    total_reviews = int(hotel_data["basic_data"]["aggregateRating"]["reviewCount"])
    total_review_pages = math.ceil(total_reviews / _review_page_size)

    # get the number of review pages to scrape
    if max_review_pages and max_review_pages < total_review_pages:
        total_review_pages = max_review_pages

    # scrape all review pages concurrently
    review_urls = [
        # note: "or" stands for "offset reviews"
        url.replace("-Reviews-", f"-Reviews-or{_review_page_size * i}-")
        for i in range(1, total_review_pages)
    ]
    # as_completed() needs awaitables, so we turn each URL into a request
    to_scrape = [client.get(review_url) for review_url in review_urls]
    for response in asyncio.as_completed(to_scrape):
        data = parse_hotel_page(await response)
        hotel_data["reviews"].extend(data["reviews"])
    print(f"scraped one hotel data with {len(hotel_data['reviews'])} reviews")
    return hotel_data

Run the code

async def run():
    hotel_data = await scrape_hotel(
        url="https://www.tripadvisor.com/Hotel_Review-g190327-d264936-Reviews-1926_Hotel_Spa-Sliema_Island_of_Malta.html",
        max_review_pages=3,
    )
    # print the result in JSON format
    print(json.dumps(hotel_data, indent=2))


if __name__ == "__main__":
    asyncio.run(run())

Here, we add the review parsing logic to the parse_hotel_page function to get all the reviews on each page. Next, we update the scrape_hotel function by adding three additional steps. First, it calculates the number of review pages available and how many of them to scrape. Next, it builds the list of review page URLs. Finally, it scrapes the remaining review pages concurrently.
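The review pagination logic can also be sketched on its own, without any network requests. The helper below mirrors the "or" offset rewriting used in scrape_hotel. The name build_review_urls is hypothetical, and the page size of 10 is taken from the code above.

```python
import math
from typing import List, Optional


def build_review_urls(
    url: str,
    total_reviews: int,
    page_size: int = 10,
    max_pages: Optional[int] = None,
) -> List[str]:
    """generate URLs for review pages 2..N of a hotel page"""
    total_pages = math.ceil(total_reviews / page_size)
    if max_pages and max_pages < total_pages:
        total_pages = max_pages
    return [
        # "or" is the review offset: or10 is page 2, or20 is page 3 and so on
        url.replace("-Reviews-", f"-Reviews-or{page_size * i}-")
        for i in range(1, total_pages)
    ]


review_urls = build_review_urls(
    "https://www.tripadvisor.com/Hotel_Review-g190327-d264936-Reviews-1926_Hotel_Spa-Sliema_Island_of_Malta.html",
    total_reviews=1058,
    max_pages=3,
)
for review_url in review_urls:
    print(review_url)
```

Since the first page is already scraped together with the hotel data, a limit of 3 pages produces only 2 additional URLs (offsets 10 and 20).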

The data we got is the same as the hotel data we got earlier, but with additional review data:

Output

{

"basic_data": {

"@context": "http://schema.org",

"@type": "LodgingBusiness",

"name": "1926 Le Soleil Hotel & Spa",

"url": "/Hotel_Review-g190327-d264936-Reviews-1926_Le_Soleil_Hotel_Spa-Sliema_Island_of_Malta.html",

"image": "https://media-cdn.tripadvisor.com/media/photo-s/23/bb/34/87/pool-outdoor.jpg",

"priceRange": "$ (Based on Average Nightly Rates for a Standard Room from our Partners)",

"aggregateRating": {

"@type": "AggregateRating",

"ratingValue": "4.5",

"reviewCount": "1058"

},

"address": {

"@type": "PostalAddress",

"streetAddress": "Thornton Street Intersection with Hughes Hallet Street",

"addressLocality": "Sliema",

"addressRegion": "",

"postalCode": "3143",

"addressCountry": {

"@type": "Country",

"name": "Malta"

}

}

},

"description": "Inspired by the life and passions of one man and featuring a touch of the roaring twenties, 1926 Le Soleil Hotel & Spa offers luxurious rooms and suites in the central city of Sliema. The hotel is located 200 meters from the seafront and also offers a splendid 1926 La Plage Beach Club on the water’s edge as well as a luxury SPA. The beach club is located 200 meters away from the hotel and is a seasonal operation. Our concept of “Lean Luxury” includes the following: • Luxury rooms at affordable prices • Uncomplicated comfort and a great sleep • Smart design technology • Raindance showerheads • Flat screens • SuitePad Tablets • Self check in and check out (if desired) • Coffee & tea making facilities",

"featues": [

"Free public parking nearby",

"Free internet",

"Pool",

"Fitness Center with Gym / Workout Room",

"Bar / lounge",

"Airport transportation",

"Meeting rooms",

"Spa",

"Paid private parking nearby",

"Street parking",

"Wifi",

"Pool / beach towels",

"Infinity pool",

"Pool with view",

"Outdoor pool",

"Heated pool",

"Saltwater pool",

"Shallow end in pool",

"Fitness / spa locker rooms",

"Sauna",

"Coffee shop",

"Restaurant",

"Breakfast available",

"Breakfast buffet",

"Complimentary Instant Coffee",

"Complimentary tea",

"Complimentary welcome drink",

"Outdoor dining area",

"Vending machine",

"Poolside bar",

"Taxi service",

"Steam room",

"24-hour security",

"Baggage storage",

"Sun deck",

"Sun loungers / beach chairs",

"Sun terrace",

"Doorperson",

"First aid kit",

"Umbrella",

"24-hour check-in",

"24-hour front desk",

"Express check-in / check-out",

"Dry cleaning",

"Laundry service",

"Ironing service",

"Shoeshine",

"Bathrobes",

"Air conditioning",

"Desk",

"Housekeeping",

"Interconnected rooms available",

"Refrigerator",

"Cable / satellite TV",

"Walk-in shower",

"Telephone",

"Wardrobe / closet",

"Bottled water",

"Private bathrooms",

"Tile / marble floor",

"Wake-up service / alarm clock",

"Flatscreen TV",

"Hair dryer",

"Non-smoking rooms"

],

"reviews": [

{

"title": "Wonderful Stay!",

"text": "Hotel was amazing! Couldnt have asked for a better getaway especially during Winter! Shoutout to Lily for always helping and also the entire restaurant/bar group of staff (i mean every single one of them!). They were ALL so welcoming and attentive with smiles on their faces at all times! Upon arrival we were given the choice of Mulled Wine or Orange Infused Water - a nice touch! Spa: Spa was open from 9am - 9pm i believe and no slot booking was required! Spa pool is heated and has jets. There is a sauna and steam room and 2 heated loungers you can lay on. Heated loungers also had cleaning spray next to them so you could wipe down the loungers before laying on them. Robes and slippers are provided in your rooms - can use to go to spa in. Breakfast: Breakfast was amazing! A",

"rate": 5,

"tripDate": " December 2023"

},

{

"title": "Special Place to Getaway from UK Winter",

"text": "For the time of year and price reflective of this I thought it was 5/5 lovely stylish hotel lobby with tall Christmas tree, piano and g/father clock and searing area. Check in was welcoming and helpful as arrived early and luggage was looked after while we all had a look around Sliema, hotel very close to waterfront restaurants and shops. The rooms on 3rd floor were clean and comfortable, balcony was looking to side street we didn't utilise as went out and about every day. Switch besides bed for do not disturb or make-up my room signs outside and code for door I thought were brilliant. Housekeeping team great. Thanks Maricel. Kind helpful receptionists Lily and Abelmy. Food good at breakfast with additions like chia pudding, overnight oats, fresh fruits, salad, eggs, cereal,",

"rate": 5,

"tripDate": " November 2023"

},

{

"title": "Poor Experience - There are better choices in Malta!!",

"text": "This hotel left much to be desired. Throughout my stay, the service quality was consistently disappointing. From check-in to check-out, the experience was subpar. I wouldn’t recommend it, particularly for solo travelers, as it seemed poorly managed and gave off the impression of taking advantage of tourists. Issues ranged from inefficient processes to lackluster customer support. Overall, a regrettable choice for accommodation",

"rate": 1,

"tripDate": " November 2023"

},

{

"title": "FANTASTIC hotel - would definitely recommend",

"text": "This hotel was fantastic. We had a city facing room, which was modern, spacious and clean. The room has a small balcony which has 2 chairs. The bathroom is very clean as well. We had breakfast included which was definitely worth it. The beach club was open for 2 of the days during our stay and it had a great atmosphere and picturesque view.. The spa was amazing as well. Would definitely recommend and we will be back. The location is great - walking distance from shops, the bus route, the sliema ferry terminal and many restaurants.",

"rate": 5,

"tripDate": " November 2023"

},

{

"title": "Classy hotel with great staff",

"text": "Really lovely hotel with very friendly and helpful staff. We had a suite with a great view of the sea. Everything is done with class, from the foyer to the bar and restaurant. Prosecco at check in is a nice touch. Breakfast buffet very good, though could do more veggie options. The spa pool and sauna is a very relaxing space. The hotel has a separate area on the sea front with a pool, bar and restaurant, which is great though it closed abruptly midweek while we were there. They also put on a free tour to Mdina in a vintage bus, which was great and a nice touch. Sliema is very built up but there's a lovely promenade walk and you're never far from restaurants and shops. There's a quick ferry to Valetta, which is definitely worth a visit. Really nice stay, and again, the staff",

"rate": 4,

"tripDate": " November 2023"

}

....

]

}

With this final feature, we have our full TripAdvisor scraper ready to scrape hotel information and reviews. We can easily apply the same scraping logic to scrape other TripAdvisor details like activities and restaurant data, as the underlying web technology is the same.

However, to successfully scrape TripAdvisor at scale we need to enhance our scraper to avoid blocking and captchas. For that, let's take a look at ScrapFly web scraping API service, which can easily allow us to achieve this by adding a few minor modifications to our scraper code.

Bypass Tripadvisor Blocking with Scrapfly

Scraping TripAdvisor.com data doesn't seem to be too difficult. However, our scraper is very likely to get blocked or asked to solve captchas when scraping at scale, which interrupts the scraping process.

Check out Scrapfly's web scraping API for all the details.

To scrape TripAdvisor using Scrapfly, we'll be using the scrapfly-sdk Python package. First, let's install scrapfly-sdk using pip:

$ pip install scrapfly-sdk

To take advantage of ScrapFly's API in our TripAdvisor web scraper, all we need to do is replace our httpx session code with scrapfly-sdk client requests:

from scrapfly import ScrapflyClient, ScrapeConfig

client = ScrapflyClient(key="Your ScrapFly API key")
result = client.scrape(ScrapeConfig(
    url="some tripadvisor URL",
    asp=True,  # enable Anti Scraping Protection
    country="US",  # select a specific country location
    render_js=True,  # enable JavaScript rendering if needed, similar to headless browsers
))
html = result.content  # get the page HTML
selector = result.selector  # use the built-in parsel selector

FAQ

Is it legal to scrape tripadvisor.com?

Yes. TripAdvisor's data is publicly available, and we're not extracting anything personal or private. Scraping tripadvisor.com at slow, respectful rates would fall under the ethical scraping definition. That being said, when scraping reviews we should avoid collecting personal information such as users' names in jurisdictions covered by GDPR (such as the EU). For more, see our Is Web Scraping Legal? article.

Why scrape TripAdvisor instead of using TripAdvisor's API?

Unfortunately, TripAdvisor's API is difficult to use and very limited. For example, it provides only 3 reviews per location. By scraping public TripAdvisor pages we can collect all of the reviews and hotel details, which we couldn't get through TripAdvisor API otherwise.

What other travel and accommodation sites can I scrape?

Similar scraping techniques can be applied to other travel platforms like Booking.com, which offers hotel listings, pricing, and review data. These travel sites often use comparable web technologies, making the scraping approaches transferable across platforms.

TripAdvisor Scraping Summary

In this tutorial, we've taken a look at scraping Tripadvisor.com for hotel overview, review and pricing data. We've also explained how to discover hotel listings using Tripadvisor's search.

For our scraper, we used Python with popular community packages like httpx and parsel. To scrape tripadvisor we used the classic HTML parsing as well as modern hidden web data scraping techniques.

Finally, to avoid being blocked and to scale up our scraper we've taken a look at Scrapfly web scraping API through Scrapfly-SDK package.

Legal Disclaimer and Precautions

This tutorial covers popular web scraping techniques for education. Interacting with public servers requires diligence and respect:

- Do not scrape at rates that could damage the website.

- Do not scrape data that's not available publicly.

- Do not store PII of EU citizens protected by GDPR.

- Do not repurpose entire public datasets which can be illegal in some countries.
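To put the first rule into practice, requests can be throttled with a concurrency cap and a short random pause. The sketch below is one possible approach and not part of the scraper above: the name throttled_gather and the default limits are assumptions chosen for illustration, and a fake coroutine stands in for client.get(url).

```python
import asyncio
import random
from typing import Awaitable, Callable, List


async def throttled_gather(
    jobs: List[Callable[[], Awaitable]],
    max_concurrency: int = 2,
    min_delay: float = 1.0,
    max_delay: float = 3.0,
) -> list:
    """run async jobs with a concurrency cap and a random pause before each one"""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def run_one(job):
        async with semaphore:
            # pause while holding a slot so requests are spaced out
            await asyncio.sleep(random.uniform(min_delay, max_delay))
            return await job()

    # gather() returns results in the same order as the jobs
    return await asyncio.gather(*(run_one(job) for job in jobs))


async def demo():
    async def fake_request(i: int) -> int:  # stand-in for client.get(url)
        return i

    jobs = [lambda i=i: fake_request(i) for i in range(4)]
    print(await throttled_gather(jobs, min_delay=0.1, max_delay=0.2))


if __name__ == "__main__":
    asyncio.run(demo())
```

Jobs are passed as zero-argument callables rather than coroutines so that each request is only created once a slot is free.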

Scrapfly does not offer legal advice but these are good general rules to follow. For more you should consult a lawyer.